You can't fork a robot (yet)

Reproducibility is all you need and a Git for robots

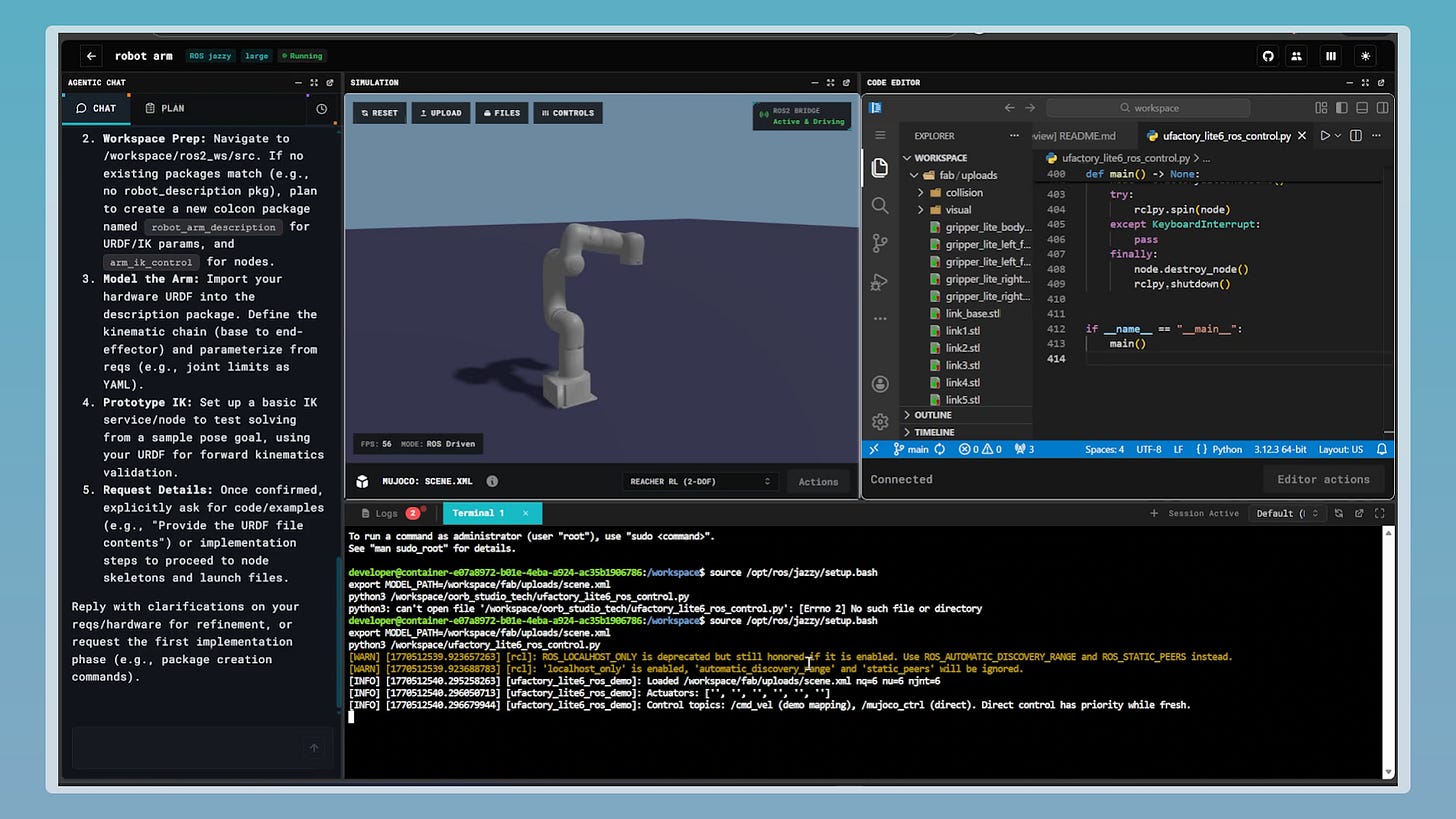

I first met Kayoum as he was launching OORB, a new-generation full-stack robotics development environment. We spent some time riffing on what the new Git for robotics might look like and poured everything into this article.

He’s a young, talented and gritty founder, and I’m beyond grateful to have the chance to spar with him on something so consequential.

I’m going to go out on a limb and say that the dirty secret of many (if not most) robotics founders is that their development environment is held together with (sometimes literal) duct tape.

A typical robotics team in 2026 juggles CAD software, a simulation platform, ROS nodes, YAML configuration files, hardware drivers, and a deployment pipeline. All of this is often stitched together by one or two engineers who understand how the pieces connect. When those engineers leave, the stitching usually unravels.

In software, we solved this problem a long time ago. Git gave us version control. Docker gave us reproducible environments. CI/CD gave us automated testing and deployment. A new engineer can clone a repo, run a command, and have a working copy of the entire application on their laptop in just a few minutes. The whole industry runs on this infrastructure and we barely think about it anymore because it just works.

In robotics, we can’t reliably fork behavior. You can fork the code, sure. But the code is maybe 30% of what makes a robot work. The other 70% is the hardware configuration, the simulation environment, the trained model weights, the sensor calibration, the physical workspace setup, the deployment-specific tuning that someone did at 2am on a customer’s site and never documented. None of that lives in Git. Most of it doesn’t live anywhere reproducible at all.

Talking to founders building robots, I noticed that even the ones fully bought into NVIDIA’s stack (which is supposedly the most complete one) still spend an absurd amount of engineering time on integration plumbing. While their Isaac Sim is a really powerful tool, getting your specific robot - with your specific sensors, your specific actuators, your specific workspace geometry - into a simulation that accurately predicts real-world behavior is still a multi-week effort. And when you change something in hardware, you’re often re-doing large chunks of that work.

The problem isn’t that the tools don’t exist. It’s that they don’t talk to each other in a way that preserves the full state of a robotics project.

Think about what “state” means for a robot:

The mechanical design (URDF/MJCF files, CAD models)

The software stack (ROS nodes, control algorithms, perception pipelines)

The simulation environment (physics parameters, scene geometry, domain randomization settings)

Trained model weights and the datasets they were trained on

Hardware-specific calibration (camera intrinsics, joint offsets, force-torque baselines)

Deployment configuration (network setup, safety zones, operational parameters)

In software, the equivalents of all of these live in version-controlled, reproducible artifacts. In robotics, they’re scattered across different tools, different file formats, different machines, and different people’s heads.
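To make the idea of versioned "state" concrete, here is a minimal sketch of a lockfile-style manifest that pins every artifact by content hash. Everything in it is an assumption for illustration: `RobotManifest`, `build_manifest`, the file names, and the placeholder contents are hypothetical, not part of any existing tool.

```python
import hashlib
import json
from dataclasses import dataclass, field


def digest(content: bytes) -> str:
    """Content hash: identical bytes always map to the same identifier."""
    return hashlib.sha256(content).hexdigest()


@dataclass
class RobotManifest:
    """Hypothetical lockfile for a robot project: one pinned digest per artifact."""
    artifacts: dict[str, str] = field(default_factory=dict)

    def pin(self, name: str, content: bytes) -> None:
        self.artifacts[name] = digest(content)

    def version(self) -> str:
        # Hash the sorted manifest so the ID does not depend on insertion order.
        canonical = json.dumps(self.artifacts, sort_keys=True).encode()
        return digest(canonical)[:12]


def build_manifest(files: dict[str, bytes]) -> RobotManifest:
    manifest = RobotManifest()
    for name, content in files.items():
        manifest.pin(name, content)
    return manifest


# Illustrative placeholder contents, not real robot files.
project = {
    "robot.urdf": b"<robot name='demo'/>",
    "sim/physics.yaml": b"friction: 0.8\n",
    "calibration/camera.json": b'{"fx": 600.0, "fy": 600.0}',
    "deploy/safety_zones.yaml": b"radius_m: 1.5\n",
}
before = build_manifest(project)

# Someone re-tunes the camera calibration on site at 2am...
project["calibration/camera.json"] = b'{"fx": 612.5, "fy": 611.9}'
after = build_manifest(project)

# ...and the change is visible instead of living in someone's head.
changed = [n for n in after.artifacts if after.artifacts[n] != before.artifacts[n]]
print(changed)
print(before.version(), "->", after.version())
```

The point of the sketch is only that calibration and deployment settings can be pinned the same way code is. A real system would also need to handle large binary artifacts such as model weights and datasets.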

Why does this matter now?

First, the open-source robotics ecosystem is having a Cambrian explosion: Hugging Face is doing for robotics what it did for NLP - democratizing access to models, datasets, and training pipelines. LeRobot has made it possible for a graduate student with a $2,000 budget to train a manipulation policy that would have required a $200,000 lab setup three years ago. There are a bunch of other great open hardware projects out there, and the number of people who can build robots is growing fast. Nonetheless, the number of people who can build robots reliably and reproducibly isn’t keeping pace - because the tooling doesn’t enforce reproducibility.

Second, AI coding tools are transforming how robotics software gets written. I’m seeing founders use Claude, Cursor, and Copilot to generate ROS nodes, write simulation configs, even draft control algorithms. Code generation is getting remarkably good. But the generated code still has to be manually integrated into a fragile, undocumented development environment. It’s like having a brilliant architect who can design any building you want, but you still have to carry every brick by hand.

The combination is creating a strange moment: although it has never been easier to write robotics code, it has never been harder to manage the full lifecycle of a robotics project.

What “forking a robot” would actually look like

Imagine this: you find an open-source project for a mobile manipulator that picks items in a warehouse. You want to adapt it for your use case - picking parts in a machine shop. In software terms, you’d fork the repo, modify what you need, and run it.

In a world with proper robotics development environments, forking would mean:

You clone the project and get everything: the robot description, the simulation scene, the trained models, the deployment configs, the test suite

You swap in your hardware specs - different arm, different gripper, different mobile base - and the simulation environment auto-updates to reflect the changes

You modify the workspace geometry to match your machine shop, run the simulation, and the system tells you which trained behaviors transfer and which need retraining

You retrain the policies that need updating, using a combination of the original dataset and your new environment

You deploy to your physical robot with a few commands, and the system tracks exactly which version of every component is running

From there, you can focus on iterating on what isn’t working, and the whole setup took only a few hours.
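One piece of that workflow, deciding which trained behaviors survive a hardware swap, can be sketched with a simple dependency check. This is a conservative proxy under stated assumptions: each policy declares the component slots it was trained against, and any change to one of those slots flags it for retraining. The `Policy` class, the slot names, and the component names are all hypothetical. A real system would confirm the result by evaluating in simulation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    name: str
    depends_on: frozenset[str]  # component slots the policy was trained against


def changed_slots(base: dict[str, str], fork: dict[str, str]) -> set[str]:
    """Slots whose component differs between the original project and the fork."""
    return {slot for slot in base.keys() | fork.keys() if base.get(slot) != fork.get(slot)}


def needs_retraining(policies: list[Policy], changed: set[str]) -> list[str]:
    """Flag a policy when any component it depends on changed."""
    return sorted(p.name for p in policies if p.depends_on & changed)


# Hypothetical original project: a warehouse picker.
warehouse = {
    "arm": "arm_a",
    "gripper": "parallel_jaw",
    "base": "diff_drive",
    "workspace": "warehouse_aisle",
}
# The fork swaps the gripper and the workspace for a machine shop.
machine_shop = {**warehouse, "gripper": "three_finger", "workspace": "machine_shop_cell"}

policies = [
    Policy("grasp", frozenset({"arm", "gripper"})),
    Policy("navigate", frozenset({"base", "workspace"})),
    Policy("arm_reach", frozenset({"arm"})),
]

changed = changed_slots(warehouse, machine_shop)
print(needs_retraining(policies, changed))  # ['grasp', 'navigate']
```

Here `arm_reach` transfers untouched because nothing it depends on changed, which is exactly the kind of answer a fork should give you for free.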

We’re nowhere close to this today. But the pieces are starting to appear.

NVIDIA’s Omniverse is arguably the most complete simulation layer. Hugging Face and LeRobot are building the model and dataset infrastructure. ROS 2 provides a somewhat standardized software framework (although most developers complain, and I would not bet on ROS staying the most relevant framework in the next 5 years). Tools like MoveIt and Nav2 handle motion planning and navigation.

What’s completely missing is the connective tissue - the layer that versions and orchestrates all of these together.

Who will build that connective tissue?

This is where it gets interesting from an investment perspective.

In the software world, the developer tools ecosystem became enormous. GitHub, Docker, Jenkins, Terraform, Kubernetes - billions of dollars of value created by tools that help developers manage complexity.

I think these success stories should make us reflect on a bunch of possible scenarios:

Path 1: NVIDIA extends downward. They already own simulation and are pushing into training and deployment. They could build the orchestration layer that ties their tools together into a complete development environment. The risk for builders: deeper lock-in to a single vendor’s stack. The risk for NVIDIA: it’s not their core competency, and developer tools require a very different product culture than GPU sales (although everything seems easy with $60B+ of free cash flow per year).

Path 2: The open-source community builds it. This is the LeRobot / Hugging Face bet. Standardized formats, open protocols, community-maintained tooling. It’s how software development tools evolved (thanks, Linus Torvalds). The challenge: robotics has too many hardware variants and the community, while growing, is still small compared to software.

Path 3: A new category of startup builds “GitHub for robotics.” A platform that versions not just code but the full robot state - hardware configs, simulation environments, trained models, deployment parameters. Something that makes the robotics development lifecycle as reproducible and collaborative as software development already is.

I’m most excited about path 3 with elements of path 2. There is something powerful about tooling built by the people who feel the pain most acutely, and robotics engineers are tired of spending time rebuilding and tweaking their environments every time something changes.

Path 3 also allows for network effects at the component level: the components that get integrated into a brand-new platform automatically become better candidates for dominance in their use case.

What would get me excited:

Reproducible environments as a primitive. Every project should be fully described by a set of versioned artifacts that can be instantiated on any compatible machine. Not just the code - the full stack down to simulation parameters and hardware configs.

Hardware abstraction that actually works. The ability to swap robot components and have the rest of the stack adapt. This is brutally hard, but it’s the key unlock. Today, changing a sensor often means rewriting perception pipelines, re-tuning controllers, and rebuilding simulation environments.

Simulation-to-real as a managed pipeline, not a prayer. The sim-to-real gap is real - I hear it from every founder. A development environment that tracks sim-to-real transfer performance and helps engineers systematically close the gap would be transformative.
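As a rough illustration of what "tracking sim-to-real transfer performance" could mean, the sketch below records success rates in simulation and on hardware per project version and flags tasks whose gap widened. The `EvalRecord` type, the 0.05 tolerance, and all the numbers are made-up assumptions, not measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalRecord:
    version: str         # project version the policy was evaluated at
    task: str
    sim_success: float   # success rate in simulation, 0..1
    real_success: float  # success rate on hardware, 0..1

    @property
    def gap(self) -> float:
        return self.sim_success - self.real_success


def regressions(history: list[EvalRecord], tolerance: float = 0.05) -> list[str]:
    """Tasks whose gap widened by more than `tolerance` between their two latest records."""
    by_task: dict[str, list[EvalRecord]] = {}
    for rec in history:  # history is assumed to be in chronological order
        by_task.setdefault(rec.task, []).append(rec)
    flagged = []
    for task, recs in by_task.items():
        if len(recs) >= 2 and recs[-1].gap - recs[-2].gap > tolerance:
            flagged.append(task)
    return sorted(flagged)


# Made-up evaluation history for two versions of a hypothetical project.
history = [
    EvalRecord("v1", "pick", 0.95, 0.80),
    EvalRecord("v1", "place", 0.90, 0.85),
    EvalRecord("v2", "pick", 0.97, 0.70),
    EvalRecord("v2", "place", 0.92, 0.88),
]
print(regressions(history))  # ['pick']
```

In this example, "pick" got better in simulation and worse on hardware, which is the pattern worth catching early. Tying each record to a project version is what turns the gap from an anecdote into something you can bisect.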

Collaborative development. Multiple engineers working on the same robot project without stepping on each other’s toes. In software, this is table stakes. In robotics, it’s still rare.

Deployment as a first-class operation rather than an afterthought. The development environment should know about the target deployment environment and flag incompatibilities before you ship hardware to a customer site.
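A preflight check is one simple way to picture this. The sketch below compares what a project requires against what a customer site reports, before anything ships. The requirement keys, units, and thresholds are hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    key: str
    minimum: float
    unit: str


def preflight(requirements: list[Requirement], site: dict[str, float]) -> list[str]:
    """Return human-readable incompatibilities; an empty list means clear to ship."""
    problems = []
    for req in requirements:
        actual = site.get(req.key)
        if actual is None:
            problems.append(f"{req.key}: not reported by site")
        elif actual < req.minimum:
            problems.append(f"{req.key}: need >= {req.minimum} {req.unit}, site has {actual}")
    return problems


# Hypothetical requirements declared alongside the robot project.
requirements = [
    Requirement("network_bandwidth_mbps", 50.0, "Mbps"),
    Requirement("aisle_width_m", 1.2, "m"),
    Requirement("safety_zone_radius_m", 1.5, "m"),
]
# Hypothetical survey of the customer site; the safety zone was never measured.
site = {"network_bandwidth_mbps": 20.0, "aisle_width_m": 1.5}

problems = preflight(requirements, site)
for problem in problems:
    print(problem)
```

Missing values are treated as problems rather than silently passing, since an unmeasured safety zone is exactly the kind of gap that surfaces on site.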

Fix the development environment and you accelerate everything downstream.

Faster iteration → better robots.

Better reproducibility → more reliable deployments.

Easier collaboration → more people building.

More builders → more deployed robots.

More deployments → more data and deployment experience flowing back to improve the next generation.

Someone will build the development environment that makes robotics engineering as fluid as software engineering. And when they do, it’ll unlock a step change in how fast the entire industry moves.

You can’t fork a robot yet. But the team that makes it possible will be building one of the most important pieces of infrastructure in robotics.

If you’re building devtools for robotics, ping me at gabriele@foundamental.com