Capability vs. Deployability

When does the deployment age of robotics foundation models start?

I met Chris as a true fan of his It Can Think! newsletter and Robopapers series.

He’s become one of the most prolific technical voices in the robotics community and I’ve had the chance to spar with him on the gaps between the current state of RFMs and their deployability.

We put our thoughts together in this article.

Robotics Foundation Models (RFMs) are making headlines with incredible demos and huge funding rounds.

Here’s a rudimentary and PhD-infuriating introduction to what RFMs are.

While I (Gabriele) have been obsessing about what makes robots a no-brainer deployable option for customers in traditional industries, Chris has been diving very deep with the best technical minds on how we can leverage RFMs to make the new generation of robots truly capable.

I want to zoom in on the difference between the two problems:

A robot is capable when it reliably solves the task in a somewhat controlled environment and clocks good performance through some sort of evaluation framework. At this point of its journey, its developers have proven that the form factor is the right one for the task and that the accuracy of its controls doesn’t degrade too much when you input some noise/variance into its environment.

A robot becomes deployable when it fails gracefully in the real world, can be maintained by people who didn’t build it, can keep the promise of a 99.5% uptime SLA, and has unit economics that work at the volume your first customer can actually buy.

Most of the demos we are seeing online these days show very capable robots - solving tasks we didn’t think were solvable before end-to-end models came about (e.g. laundry folding). However, this capability often comes at a cost in reliability – meaning that such robots might not actually be deployable in the real world.

Deployable robots, instead, are less likely to make headlines, and the reason seems to be that - today - this class of robots is still doing mundane tasks like moving material from A to B, pulling/towing something autonomously, printing discrete floor plans on the ground. Often these tasks aren’t even done autonomously, and in order to generate an ROI for customers they may not even need to be autonomous. They are just Robots That Work.

Robotics Foundation Models are showing incredible steps forward to make robots capable, but are still lagging behind when it comes to deployability.

Let’s try to zoom in and understand why.

RFM and the deployability gap

Robotics foundation models are non-deterministic. They generate actions through probabilistic inference over learned distributions rather than executing hand-coded logic, and their input space is extremely high-dimensional. What this means is that, even after learning in simulation, the robot won’t have seen some little corner case of the real-world version of the problem, and its behavior will degrade. If carefully engineered, the robot can have more “tunnel vision” and only respond to relevant stimuli, so that the outcomes of its actions become easier to predict. Doing so obviously takes a bite out of its capability set.

The potential upside, however, is quite enticing. RFMs may hold the key to generalized robot intelligence, when paired with the right embodiment.

If the bet is right, in X years we may see something like a single “factory robot” doing everything that a welding / material movement / machine tending / palletizing / assembly / dispensing robot can do today.

However, we’re nowhere near this today:

Interpretability of the model’s actions is still quite poor, which means that the risk of downtime if a policy fails is very high. Interpretability matters because downtime is expensive and you can’t diagnose (and fix) what you can’t understand.

Teleoperation intervention may solve the problem practically but damages the unit economics of the deployment. It is also often infeasible: teleoperating a robot means having a very-low-latency connection at all times and handling teleop’s own failures.

Industrial customers prioritize safety and reliability over capability. Much of what is being adopted now was already possible 20 years ago with industrial robots or 10 years ago with line-following AMRs.
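The teleoperation trade-off can be put into rough numbers. The sketch below is a back-of-the-envelope model, not a description of any real deployment: the function names, the intervention times and the operator cost are all hypothetical, and it ignores queueing (two robots needing help at the same moment) as well as latency and connectivity costs.

```python
def robots_per_operator(intervention_min_per_robot_hour, operator_min_per_hour=60):
    """Upper bound on how many robots one remote operator can cover.

    Both arguments are whole minutes. The result is optimistic because it
    assumes interventions never overlap.
    """
    if intervention_min_per_robot_hour <= 0:
        raise ValueError("intervention time must be positive")
    return operator_min_per_hour // intervention_min_per_robot_hour


def teleop_cost_per_robot_hour(operator_hourly_cost,
                               intervention_min_per_robot_hour,
                               operator_min_per_hour=60):
    """Operator cost attributed to each robot-hour of operation."""
    fleet = max(1, robots_per_operator(intervention_min_per_robot_hour,
                                       operator_min_per_hour))
    return operator_hourly_cost / fleet


# Hypothetical inputs: the operator costs 30 per hour and is usable
# for 45 minutes of each hour.
for minutes in (3, 15, 30):
    fleet = robots_per_operator(minutes, 45)
    cost = teleop_cost_per_robot_hour(30.0, minutes, 45)
    print(f"{minutes:>2} min of help per robot-hour: "
          f"{fleet} robots per operator, {cost:.2f} per robot-hour")
```

Under these assumed inputs, the teleop cost per robot-hour grows quickly as the policy needs more help, which is the unit-economics pressure described above.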

In the end, the ultimate benchmark is how well, reliably and economically your robot performs in the real world.

For great innovations to become products, you need economic and operational viability: from a single laundry-folding demo to a robot that folds laundry 8 hours a day, 6 days a week, with a 99.5% uptime SLA, near-zero liability risk and a payback period under 10 to 12 months.
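To get a feel for how tight those targets are, here is a small worked calculation. It assumes the SLA is measured against scheduled operating hours only (the text does not say how it is measured), and the cost and savings figures in the payback example are invented purely for illustration.

```python
def downtime_budget_minutes(hours_per_day, days_per_week, uptime_sla):
    """Minutes of downtime per week that the SLA tolerates.

    Assumes uptime is measured over scheduled operating time only.
    """
    scheduled_minutes = hours_per_day * days_per_week * 60
    return scheduled_minutes * (1.0 - uptime_sla)


def payback_months(upfront_cost, monthly_savings, monthly_operating_cost):
    """Months until cumulative net savings cover the upfront cost."""
    net = monthly_savings - monthly_operating_cost
    if net <= 0:
        return float("inf")  # the robot never pays for itself
    return upfront_cost / net


# Schedule and SLA taken from the text: 8 h/day, 6 days/week, 99.5% uptime.
budget = downtime_budget_minutes(8, 6, 0.995)
print(f"Weekly downtime budget: {budget:.1f} minutes")

# Hypothetical economics, for illustration only.
months = payback_months(upfront_cost=120_000,
                        monthly_savings=14_000,
                        monthly_operating_cost=3_000)
print(f"Payback period: {months:.1f} months")
```

On that schedule the downtime budget works out to roughly a quarter of an hour per week, so a single hard-to-diagnose policy failure can consume it.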

Trivially but very importantly, you have to make a case for increased throughput and, at least for now, new AI methods are way slower than either old-school deterministically controlled robots or humans themselves.

So what may the bottlenecks be to make these models converge towards deployability? Almost everything comes down to poor data coverage.

Poor data at deploy time

Humans make decisions using a much broader temporal window than current robots. We remember where we put something five hours ago, and notice if a drawer is slightly more open than usual. We essentially integrate information from memory, touch, sound, and peripheral vision automatically.

Robots, and the models that run them, mostly don’t. They work primarily from what the camera sees right now, with a limited context window. In a well-characterized, stable environment that’s fine, but in the messy reality of real deployments, partial observability is a constant source of failure.

You can address this with more data and longer context windows, but the cost in compute and training data is steep. It’s tractable, but not yet solved.

Robots are also largely missing a key sense to do things in the physical world. Humans grab things and move objects around without looking or by using touch, which most robots don’t yet have. Rodney Brooks wrote a great post about this.

Poor task coverage

The failure mode isn’t just “I haven’t seen this object before” but rather “I haven’t seen all the ways I might need to move my hand to interact with this object in this configuration in this environment.” The space of real-world physical interactions is enormous, and the data we have covers it in discrete islands, not a continuous field.

There is information that transfers across tasks and environments. The manipulation priors a model builds from seeing millions of human-object interactions carry some signal into new domains. Nonetheless, humans are radically more efficient learners than current robots in part because of the priors we bring with us from our entire physical life up to that point in time.

What makes new learning research exciting is that it is a way to explore only in the right places, to make robot learning work more like human learning rather than brute-force data collection.

These two gaps intertwine.

Poor deployment data + poor task data = no uncertainty awareness

Again, RFMs learn everything they know from a tiny fraction of the entire solution space.

Not only does this mean that they fail easily in out-of-distribution situations, but also that it’s extremely hard to compute uncertainty measures without good data coverage. Current data availability is clustered around a bunch of discrete islands, which means that you don’t have the support needed to estimate a confidence level for the next action.

Like LLMs, these models are also overconfident, and they follow through on their mistakes.
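The “islands” picture can be illustrated with a toy support check: measure how far a new situation is from the nearest training example and refuse to trust the policy when it is too far. This is only a sketch of the idea, not how any RFM estimates uncertainty; the function names, the 2D points and the radius are made up, and distances in real high-dimensional observation spaces are far less informative than in this example.

```python
import math


def nearest_distance(query, training_points):
    """Euclidean distance from the query to its closest training point."""
    return min(math.dist(query, point) for point in training_points)


def is_supported(query, training_points, radius):
    """True if the query lies within `radius` of some training point."""
    return nearest_distance(query, training_points) <= radius


# Two hypothetical "islands" of training data in a 2D feature space.
island_a = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1)]
island_b = [(5.0, 5.0), (5.1, 5.0), (5.0, 5.1)]
training_data = island_a + island_b

inside = (0.05, 0.05)   # sits within island A
between = (2.5, 2.5)    # falls in the gap between the islands

print(is_supported(inside, training_data, radius=0.5))
print(is_supported(between, training_data, radius=0.5))
```

The point in the gap has no nearby data to anchor a confidence estimate, which is the situation the paragraph above describes.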

Integrating your way to deployability

By looking at a lot of these demos, we may get the sense that once the model gets smart enough, and accuracy rates increase, you may finally have a drop-in “hardware laborer” to deploy in your facility.

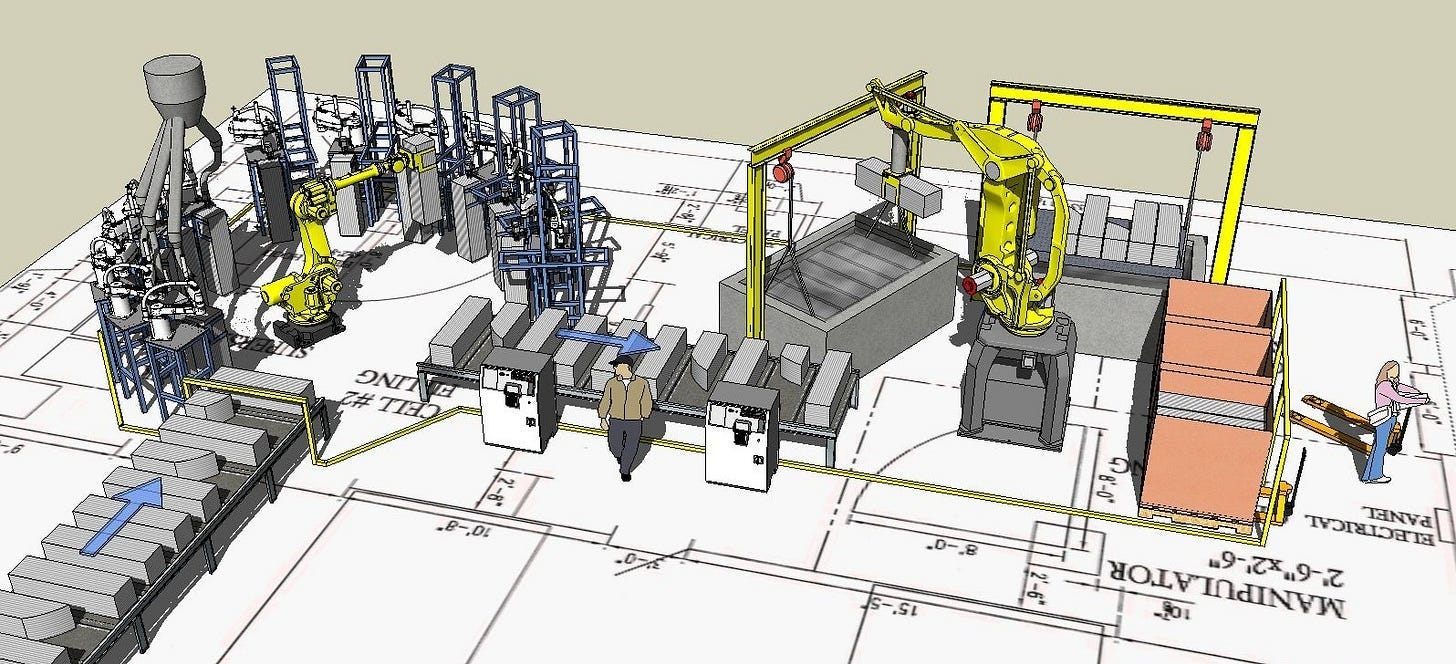

This is likely to be just wishful thinking: a key bottleneck to deployment is, and will remain, electrical and wiring work, facility re-layouting and the robot-adjacent infrastructure that keeps robots from getting stuck on the physical constraints of an environment previously designed for humans. And yes, for many use cases, that also matters for humanoids.

A factory for robots looks very different from a factory for humans.

Today, mobile robots may make some of this easier but this usually comes at the cost of throughput: without re-layouting your facility for indoor robotic transport of material, you’re not getting the most out of the solution and your accountant may tell you that it’s not even worth adopting that AMR after all.

This is why system integrators, who holistically manage the complexity of integrating automation in your facility, will remain of paramount importance. If anything, RFMs may make the integrator’s role more important in the near term, because the models’ uncertainty has to be managed by someone, and that someone will be the integrator holding the SLA.

That sparks the question: are today’s integrators equipped to be the ones deploying RFM-powered machines?

On one side, it’s a product packaging/readiness question: can legacy integrators source RFM-powered robots that (1) have an abstracted-enough UI/UX to allow a non-PhD-roboticist (as 99.5% of them are) to manage failure modes and (2) can cross the threshold of the classic manufacturer disbelief (“this has worked for others, but it won’t work for me”)?

The lack of software skills (better, AI/ML software skills) within legacy integrators may prove to be a big drag on this scenario. Maybe a new class of software-first integrators can make this happen?

Model companies may evolve to become the integrators for their own solutions. They know best how to tame their model. Companies like Skild and Physical Intelligence may follow the Red Hat playbook and, while continuing to open source their work, become the ones managing complexity for a certain class of end customers (e.g. enterprises with large deployment pipelines, operators with very complex automation lines).

This comes with the burden of holding SLAs, owning operational capacity on the ground and managing the complexity of having to deliver an outcome (i.e. a robot doing the thing correctly 99% of the time). This will likely clash with the math around margins and exit multiples that convinced early-stage investors to pour billions into their early rounds.

It’ll be interesting to see how this shakes out and how RFM-powered robotics gets deployed.

Conclusions

Something is already moving.

A few absolutely-not-exhaustive examples are Ultra and Weave partnering with PI for their early commercial deployments and Sunday’s new motto “No more demos”.

I remain convinced that most of the near-term value of robotics still lies in the boring automation that is progressively crossing the threshold of deployability. Although these machines won’t suffice as moats to build huge companies, their deployment will be the real value generator. The value accrues at the service layer.

I’ll be writing about this soon.

Yet, understanding the bleeding edge of robotics models will remain very important given its immense expected return (= probability times payoff).

Although the probability of general deployable robots seems low right now, the payoff tends to the $ value of the entirety of human labor.

The gap between capability and deployability is effectively a systems design problem.

"Teleoperation intervention may solve the problem practically but damages the unit economic of the deployments" - Perhaps there is some in-between paradigm where the robot takes general guidance from a human and executes it (or specific guidance at critical times), freeing a human operator to tend to multiple robots at once.

I suppose this is sort of how automotive or even airplane autopilots work today. Generally they do their thing, but need a human to step in at critical times. If the human is looking down from a factory control tower (or whatever) to step in as needed, there might be a delay at times, but one operator could take care of many robots.